An AI project can be thought of as a ‘container’ where all the settings and information needed to build an AI are held.

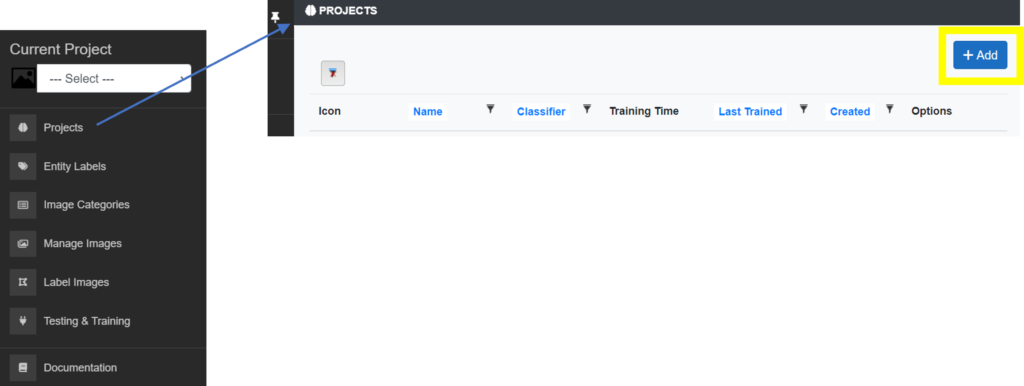

Once you have created one or more AI projects, they will be available in the project selector in the main left menu.

To create a project, select the ‘Projects’ item in the main left menu. This loads a list of your existing projects, if any. At the top right of the project listing page, select the ‘Add’ button, as shown in the screenshot below.

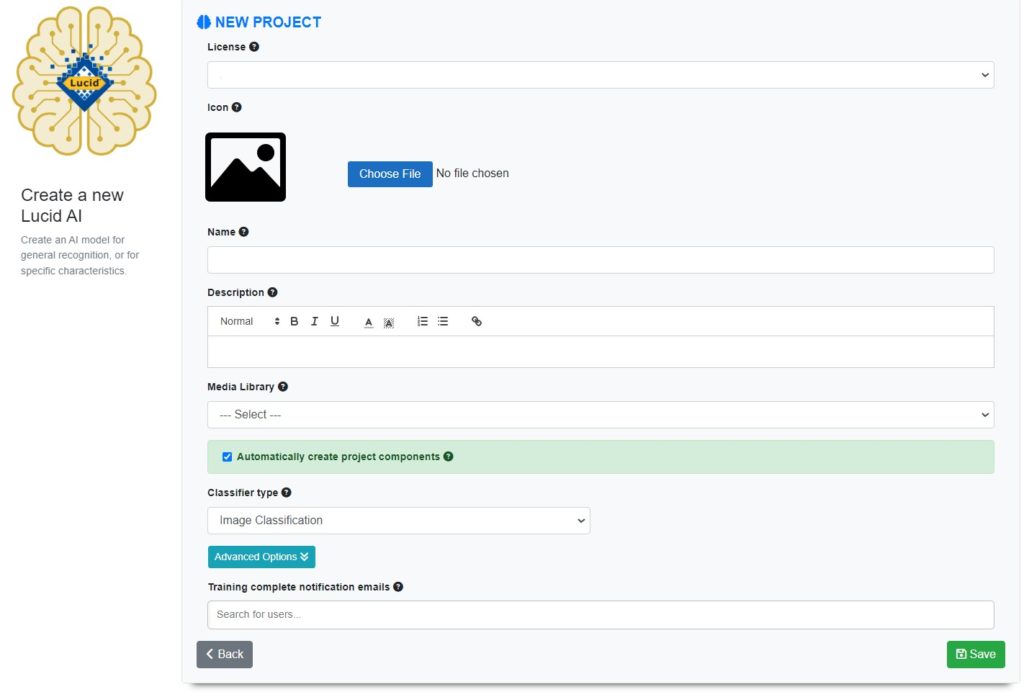

The following options are available when creating or editing a project.

Licences

Lucid AI projects can only be created under a licence. A licence defines how many projects you can create and which resources, such as Media Libraries, are available to them. A licence also lets you manage the users in your organization or team who have access to your project, and their roles within it.

If more than one licence is available to you, choose the licence you want to create your project under.

Note

To create a new project, your licence must have an unused AI project instance available.

Title

The project title helps you, your team members and potential users identify your project. It is also displayed when the AI is offered as a service, if the AI is made publicly accessible.

Icon

Associate an image that represents your AI project. Along with the title and description, this icon is used in the AI’s listing if the AI is made publicly visible. The image can be a JPEG or PNG file and is automatically resized when it is saved with your project.

Description

Use the description field to outline your AI’s objective. Also include any specific information that users should know when interacting with your AI. For example, if you were building an AI to identify a genus of flies and trained it only on dorsal views, state that users should submit images taken from the same view.

An example usage description for users of a completed AI product:

Please submit images of the subject showing leaves, flowers, or fruit. Any view angle should be OK except from underneath. Ideally the photo will show the subject from 10-20 inches, not macro parts or distance shots of the subject. The subject should be in focus. The selected image regions should include only the one subject and exclude as much background as possible. The subject should not be a dried specimen, insect damaged, sprayed with water droplets or covered in snow or other contaminants such as dust and dirt. Hands and fingers should also be excluded as much as possible. For the latest taxa this AI has been trained on, please see this list.

Media library

Your AI project requires an associated Media Library. The Media Library provides all the image services the AI building process needs, such as storage, image sizing and image augmentation. Because images are held in a Media Library, multiple projects (not just AI projects) can share the same set of images. For example, a Lucid key and an AI project can share the same set (or subset) of images. A Media Library provides a single ‘source of truth’ for image information such as labels, ownership, copyright and licence details.

You and any project team members must have at least read-only access to the Media Library associated with your AI project. If you have Editor or higher rights (e.g., Administrator), you can add images to your Media Library through the Lucid AI interface.

Tip

The Lucid AI application is not designed to manage your Media Library (e.g., adding or editing categories, or deleting and moving media). This is done through the Media Library’s own user interface, so log in to your Media Library application if you wish to manage it.

Automatically create project components

If your Media Library already contains categories that represent the entity labels you want to use in your AI project (e.g., plant, insect or other taxa), this option can save considerable time when setting up your project’s components.

For example, if your Media Library was created from a Lucid key upload, its categories may already reflect the entities (taxa) you want to use as AI entity labels, and the images they contain may be suitable for AI training.

If this option is selected, the AI project is created from the Media Library. AI image categories are created automatically to match the Media Library categories, and all images in those categories are synced to the corresponding AI image categories and augmented. Entity labels are created from the Media Library categories, and each image is automatically labelled (annotated) as a “whole image”, ready for AI training.
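
The automatic setup can be pictured as a mapping from Media Library categories to AI project components. The Python sketch below is illustrative only: `build_components`, `ProjectComponents` and `Annotation` are hypothetical names, not part of Lucid AI, and image resizing and augmentation are left out. It assumes a Media Library can be represented as a dictionary of category names to image IDs, and it represents a “whole image” label as a region covering the full frame.

```python
from dataclasses import dataclass, field


# Hypothetical data structures for illustration; not the Lucid AI API.
@dataclass
class Annotation:
    image_id: str
    entity_label: str
    # Region as (x, y, width, height) relative to the image size.
    # A "whole image" annotation covers the full frame.
    region: tuple = (0.0, 0.0, 1.0, 1.0)


@dataclass
class ProjectComponents:
    entity_labels: list = field(default_factory=list)
    image_categories: dict = field(default_factory=dict)
    annotations: list = field(default_factory=list)


def build_components(media_library: dict) -> ProjectComponents:
    """Derive AI project components from Media Library categories.

    `media_library` maps each category name to a list of image IDs.
    Resizing and augmentation are omitted from this sketch.
    """
    components = ProjectComponents()
    for category, image_ids in media_library.items():
        # One entity label and one AI image category per Media Library category.
        components.entity_labels.append(category)
        # Copy the list so the Media Library data itself is never modified.
        components.image_categories[category] = list(image_ids)
        # Label every synced image with a whole-image annotation.
        for image_id in image_ids:
            components.annotations.append(Annotation(image_id, category))
    return components
```

For example, a library with two images in a hypothetical ‘Acacia’ category and one in ‘Banksia’ would produce two entity labels and three whole-image annotations. Copying each image list mirrors the fact that the original Media Library images are left untouched.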

Depending on how many categories and images the Media Library contains, this process can take anywhere from several minutes to several hours. Synchronizing is time-consuming because each image is sized and augmented specifically for your selected AI model type (see Classifier Type below for more information on model types).

When the AI project setup is complete, you will be notified through the application notifications and by email.

Note

The original images in the Media Library are not modified in any way when they are used in your AI project.

Classifier Type

There are two possible types of AI project classifier: overall image classification, and object detection (recognizing individual objects within an image). Currently only overall image classification is available; object detection is planned for the near future.

Advanced Options

Architecture type

Lucid AI supports several AI architectures, each with different trade-offs in model size, speed and target platform (e.g., mobile devices).

ResNet V2 101

ResNet, short for Residual Network, is a family of image classification architectures with a variable number of layers. The ResNet V2 101 implementation contains 101 layers. It gives excellent recognition results but is slower to train and slightly slower at prediction. It is currently considered one of the best architectures for image classification, and it is the default architecture type for a project.

ResNet V2 50

ResNet V2 50 is the 50-layer implementation of the ResNet (Residual Network) family. It is faster to train and faster at prediction than ResNet V2 101, with only slightly lower recognition accuracy.

Inception V3

Inception V3 is the third version of Google’s Inception convolutional neural network and can achieve high recognition accuracy. The model is the culmination of many ideas developed by multiple researchers over the years. It is based on the paper “Rethinking the Inception Architecture for Computer Vision” by Szegedy et al.

MobileNet V2

MobileNet V2 is a convolutional neural network architecture designed to perform well on mobile devices; its original design includes 19 residual bottleneck layers. It is intended for use within mobile applications that run the AI on the device itself (as opposed to an online AI service that requires an internet connection). It trains faster than the other architectures, but its recognition accuracy is not quite as high.

Testing percentage

Set the percentage of images in each entity label’s dataset that is held back from training and used to test the AI’s accuracy during the training process. If you have a limited number of images or regions per entity label, you can select a smaller percentage. Test images are important because they are used to determine whether the AI is still gaining prediction accuracy for each entity label during the training session. See the ‘Epochs’ help topic for more information.
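
The hold-back described above is a per-label (stratified) split: each entity label contributes the same percentage of its images to the test set. The following Python sketch illustrates the idea; `split_per_label` is a hypothetical helper, not Lucid AI’s implementation, and rounding the test count down is an assumption made for this example.

```python
import random


def split_per_label(images_by_label, test_percentage, seed=0):
    """Hold back `test_percentage` percent of each entity label's images for testing.

    Returns (train, test) dictionaries keyed by entity label. The split is
    stratified: every label contributes the same proportion of test images.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, test = {}, {}
    for label, images in images_by_label.items():
        shuffled = list(images)
        rng.shuffle(shuffled)
        # Round down (an assumption), so small labels keep more training images.
        n_test = int(len(shuffled) * test_percentage / 100)
        test[label] = shuffled[:n_test]
        train[label] = shuffled[n_test:]
    return train, test
```

With a 20% setting, a label with 50 images keeps 40 for training and 10 for testing, while a label with only 7 images gives up just 1. Every test image is one fewer training image, which is why a smaller percentage can make sense when images are scarce.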

Batch size

The batch size determines how many images are passed through your AI in each training step before its internal parameters are updated. At the end of each batch, the AI’s predictions are compared with the expected output and an error value is calculated. An update algorithm then uses this value to improve the model.

Batching limits the amount of data processed at one time, because it is normally impossible to pass all the images through the model at once (for example, they would not all fit in memory).
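
To make the batch-size description concrete, the toy Python example below runs mini-batch gradient descent on a one-variable linear model rather than an image classifier. The functions are hypothetical and not part of Lucid AI; the example only shows that the parameters are updated once per batch, so one epoch over N images takes ceil(N / batch size) updates.

```python
import math


def batches(items, batch_size):
    """Yield consecutive slices of at most `batch_size` items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def steps_per_epoch(n_images, batch_size):
    """Number of parameter updates needed for one pass over the data."""
    return math.ceil(n_images / batch_size)


def train_one_epoch(data, weight, bias, batch_size, learning_rate=0.1):
    """One epoch of mini-batch gradient descent for the toy model y = weight * x + bias."""
    for batch in batches(data, batch_size):
        # Error between prediction and expected output for each example.
        errors = [(weight * x + bias) - y for x, y in batch]
        # Gradients of the mean squared error over this batch only.
        grad_w = sum(2 * e * x for e, (x, _) in zip(errors, batch)) / len(batch)
        grad_b = sum(2 * e for e in errors) / len(batch)
        # Update the parameters once per batch.
        weight -= learning_rate * grad_w
        bias -= learning_rate * grad_b
    return weight, bias
```

For example, 1,000 training images with a batch size of 32 take 32 updates per epoch, with the last batch holding only 8 images.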

Epochs

The number of epochs is the number of times the AI learning algorithm works through the entire training image dataset. Training stops automatically before this limit once accuracy on the held-back test images no longer improves. Setting this value too high can cause your AI to overfit: it learns patterns that are very specific to the training set and may then perform poorly on images outside it.
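
Automatic stopping of this kind is commonly implemented as early stopping with a ‘patience’ setting. The Python sketch below assumes training stops after a fixed number of consecutive epochs with no improvement in test accuracy; the function name and the default patience of 3 are hypothetical, and Lucid AI’s actual stopping rule may differ.

```python
def train_with_early_stopping(train_epoch, evaluate, max_epochs, patience=3):
    """Run up to `max_epochs` epochs, stopping early when test accuracy stalls.

    `train_epoch()` performs one pass over the training images and
    `evaluate()` returns accuracy on the held-back test images.
    Training stops after `patience` consecutive epochs without improvement.
    Returns (best_epoch, best_accuracy, epochs_run).
    """
    best_epoch, best_accuracy = 0, float("-inf")
    epochs_without_improvement = 0
    epochs_run = 0
    for epoch in range(1, max_epochs + 1):
        epochs_run = epoch
        train_epoch()
        accuracy = evaluate()
        if accuracy > best_accuracy:
            best_epoch, best_accuracy = epoch, accuracy
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # no improvement for `patience` epochs: stop early
    return best_epoch, best_accuracy, epochs_run
```

Here `train_epoch` and `evaluate` are placeholders for whatever trains the model for one epoch and measures accuracy on the test images. The returned best epoch marks where test accuracy peaked, even if training ran a few epochs longer.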

Determining the best Batch Size and Epoch values

Unfortunately, there is no formula for determining the best values for these two parameters, so you may need to experiment to find what works best for your classification problem. In most cases the default values should give respectable results. If you are getting poor results, check the number of images or regions for each entity label to make sure there is enough data to cover your classification needs. With augmentation, 50-100 images per entity label is normally adequate.
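
Before adjusting batch size or epochs, it can help to check image coverage per entity label. This hypothetical Python helper flags labels that fall below the 50-image rule of thumb above; it assumes you can obtain a mapping of entity labels to their images or regions, which is not a documented Lucid AI feature.

```python
def labels_needing_images(images_by_label, minimum=50):
    """Return (label, count) pairs for entity labels with fewer than `minimum` images.

    The default of 50 follows the rule of thumb of 50-100 images per
    entity label (with augmentation). Results are sorted from the fewest
    images upwards, so the weakest labels come first.
    """
    counts = {label: len(images) for label, images in images_by_label.items()}
    short = [(label, n) for label, n in counts.items() if n < minimum]
    return sorted(short, key=lambda pair: (pair[1], pair[0]))
```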

“Training complete” notification emails

Search for and select users associated with your licence. The selected licence members will be notified when an AI training session completes.

Note

Only valid licence users will receive notifications.

AI public

Enable this option to make your AI accessible to the public through the Lucidcentral AI services. Enabling it does not make the AI visible in public listings; that is controlled by the AI publicly visible option.

Note

By default, all valid users on your licence who have at least read-only access to the project can use the AI, regardless of the AI public and AI publicly visible settings.

AI publicly visible

You can make information about your AI project (its title, description and icon) publicly visible through the Lucidcentral AI services. Making this information visible does not make the AI itself accessible to the public. To do that, enable the AI public option.
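
Because the AI public and AI publicly visible options are independent, it can help to think of them as two separate flags, combined with the rule that licence users with read access always have access. The Python sketch below models that behaviour as described in this guide; the class and function names are hypothetical and do not correspond to a Lucid AI API.

```python
from dataclasses import dataclass


@dataclass
class SharingSettings:
    ai_public: bool = False         # "AI public": anyone can use the AI
    publicly_visible: bool = False  # "AI publicly visible": title, description, icon are listed


def can_use_ai(settings: SharingSettings, has_licence_read_access: bool) -> bool:
    # Licence users with at least read-only access always have access;
    # everyone else needs the "AI public" option.
    return has_licence_read_access or settings.ai_public


def is_listed_publicly(settings: SharingSettings) -> bool:
    # Listing depends only on "AI publicly visible", never on "AI public".
    return settings.publicly_visible
```

In this model a project can be listed but not usable by the public, or usable by the public but unlisted, which matches the two options described above.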

Editing a project

You can edit your project’s settings at any time. After making changes, click the Save button to save them.

Deleting a project

A project can be deleted. Deleting a project also deletes the AI and all data associated with the project.

Do not delete a project if you have other services or applications that rely on the AI.

Because deleting a project can affect other services that rely on the AI, you must type the project’s name to confirm the deletion.

Warning

There is no undo for the project delete action.